Last week, it was brought to our attention that Duke Digital Collections recently passed 100,000 individual items found in the Duke Digital Repository! To celebrate, I want to highlight some of the most recent materials digitized and uploaded from our Section A project. In the past, Bitstreams has blogged about what Section A is and what it means, but it’s been a couple of years since that post, and a little refresher couldn’t hurt.

What is Section A?

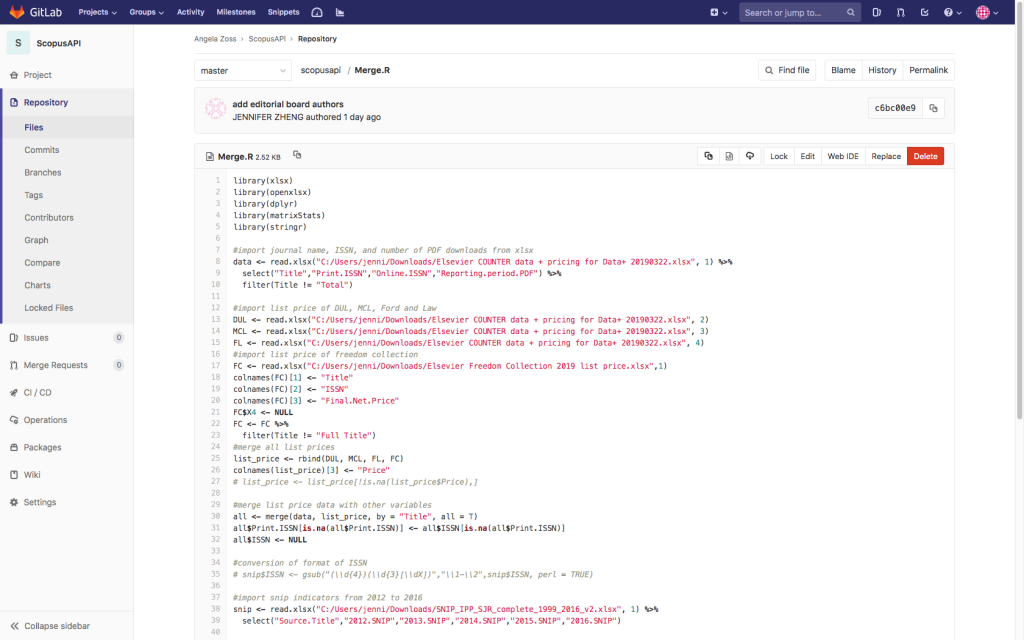

In 2016, the staff of Rubenstein Research Services proposed a mass digitization project of Section A. This is the umbrella term for 175 boxes of different historic materials that users often request – manuscripts, correspondence, receipts, diaries, drawings, and more. These boxes contain around 3,900 small collections, each with its own workflow. Every box needs consultations from Rubenstein Research Services, review by Library Conservation Department staff, review by Technical Services, metadata updates, and more, all to make sure that the collections can be launched and hosted within the Duke Digital Repository.

In the 2 years since that blog post, so much has happened! Back then, the first 2 Section A collections had gone live as a sort of proof of concept, and as a way to define what the digitization project would be and what it would look like. We’ve added over 500 more collections from Section A since then. Somehow, this barely scratches the surface of the entire project! We’re digitizing the collections in alphabetical order, and even after all the collections that have gone online, we are still only on the letter “C”!

Nonetheless, there are already plenty of materials to check out and enjoy. I was a student of history in college, so in this blog post, I want to particularly highlight some of the historic materials from the latter half of the 19th century.

Showing off some of Section A

In 1869, after her work as a nurse in the Civil War, Clara Barton traveled around Europe, visiting Geneva, Switzerland, and Corsica, France. Included in the Duke Digital Collections are her diary and calling cards from her time there. These pages detail where she visited and stayed throughout the year. She also wrote about her views on the different European countries, how Americans and Europeans compare, and more. Despite her storied career and her many travels that year, Miss Barton felt that “I have accomplished very little in a year”, and hoped that in 1870, she “may be accounted worthy once more to take my place among the workers of the world, either in my own country or in some other”.

Back in America, around 1900, the Rev. John Malachi Bowden began dictating and documenting his experiences as a Confederate soldier during the Civil War, one of many soldiers that a nurse like Miss Barton may have treated. Although Bowden says he was not necessarily a secessionist at the beginning of the Civil War, he joined the 2nd Georgia Regiment in August 1861 after Georgia had seceded. During his time in the regiment, he fought in the Battles of Fredericksburg, Gettysburg, Spotsylvania Court House, and more. In 1864, Union forces captured Bowden and held him as a prisoner at Maryland’s Point Lookout Prison. He describes in great detail what life was like there as a POW before his eventual release. He writes that he was “so indignant at being in a Federal prison” that he refused to cut his hair. His hair eventually grew to be shoulder-length, “somewhat like Buffalo Bill’s.”

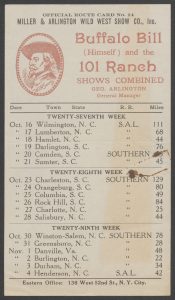

Speaking of whom, Duke Digital Collections also has some material from Buffalo Bill (William Frederick Cody), courtesy of the Section A initiative. A showman and entertainer who performed in cowboy shows throughout the latter half of the 19th century, Buffalo Bill was enormously popular wherever he went. In this collection, he writes to a Brother Miner about how he invited seventy-five of his “old Brothers” from Bedford, VA to visit him in Roanoke. There is also a brief itinerary of future shows throughout North Carolina and South Carolina. This includes a stop here in Durham, NC a few weeks after Bill wrote this letter.

Around this time, Walter Clark, associate justice of the North Carolina Supreme Court, began writing his own histories of North Carolina throughout the 18th and 19th centuries. Three of Clark’s articles prepared for the University Magazine of the University of North Carolina have been digitized as part of Section A. These include an article entitled “North Carolina in War”, where he made note of the generals from North Carolina engaged in every war up to that point. It’s possible that John Malachi Bowden was once on the battlefield alongside some of the generals mentioned in Clark’s writings. This type of synergy in our collection is what makes Section A so exciting to dive into.

As the new Still Image Digitization Specialist at the Duke Digital Production Center, seeing projects like this take off in such a spectacular way is near and dear to my heart. Even just the four collections I’ve highlighted here have been so informative. We still have so many more Section A boxes to digitize and host online. It’s so exciting to think of what we might find and what we’ll digitize for all the world to see. Our work never stops, so remember to stay updated on Duke Digital Collections to see some of these newly digitized collections as they become available.