Last summer, Sean and I wrote about efforts we were undertaking with colleagues to assess the research and scholarly impact of Duke Digital Collections. Sean wrote about the data analysis approaches we took to detect scholarly use, and I wrote about a survey we launched in spring 2015. The goal of the survey was to gather information about our patrons and their motivations that was not obvious from Google Analytics and other quantitative data. The survey was live for 7 months, and today I’m here to share the full results.

In a nutshell (my post last summer included many details about setting up the survey), the survey asked users “who are you,” “why are you here,” and “what are you going to do with what you find here?” The survey was accessible from every page of our Digital Collections website from April 30 – November 30, 2015. We set up event tracking in Google Analytics, so we know that around 43% of our 208,205 visitors during that time hovered on the survey link. A very small share clicked through (659 clicks, or about 0.3% of all visitors), but 20% of the users who clicked through did answer the survey. This gave us a total of 132 responses, only one of which seems to be 100% spam. Traffic to the survey remained steady throughout the survey period. Now, on to the results!
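For readers who want to check the funnel arithmetic, here is a minimal sketch in Python. It uses only the figures quoted above; the variable names are illustrative, and the hover count is an estimate because the post gives the hover share only as "around 43%". It also shows that the 0.3% click-through figure lines up with clicks divided by all visitors rather than by visitors who hovered.

```python
# Figures quoted in the post; variable names are illustrative assumptions.
visitors = 208_205      # visitors between April 30 and November 30, 2015
hover_share = 0.43      # "around 43%" hovered on the survey link
clicks = 659            # click-throughs to the survey
responses = 132         # completed surveys

# Estimated number of visitors who hovered (approximate, since 43% is rounded).
hovered = round(visitors * hover_share)

# Click-through rate against all visitors and against hoverers only.
click_rate_all = clicks / visitors
click_rate_hovered = clicks / hovered

# Share of click-throughs that ended in a submitted survey.
response_rate = responses / clicks

print(f"Estimated hovers:          {hovered:,}")
print(f"Clicks / all visitors:     {click_rate_all:.2%}")
print(f"Clicks / hovering visitors: {click_rate_hovered:.2%}")
print(f"Responses / clicks:        {response_rate:.1%}")
```

Measured against all visitors, the click-through rate rounds to 0.3%; measured against the estimated hover count, it is closer to 0.7%.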

Question 1: Who are you?

Respondents were asked to identify as one of 2 academically oriented groups (students or educators), as librarians, or as “other”. Results are represented in the bubble graphic below. You can see that the majority of respondents identified as “other”. Of those 65 respondents, 30 described themselves, and these labels have been grouped in the pie chart below. It is fascinating to note that, apart from the handful of self-identified musicians (I grouped vocalists, piano players, and anything musical under musicians) and retirees, there is a large variety of self-descriptors listed.

The breakdown of responses to question 1 remained steady over time when you compare the overall results to those I shared last summer. Overall, 26% of respondents identified as student (compared to 25% in July), 14% identified as educator (compared to 18% earlier), 9% identified as librarian, archivist or museum overall (exactly the same as earlier), and 51% identified as other (47% in the initial results). We thought these results might change when the fall academic semester started, but as you can see, that was not the case.

Question 2: Why are you here?

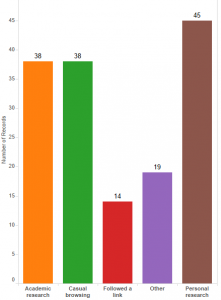

As I said above, our goal in all of our assessment work this time around was to look for signs of scholarly use, so we were very interested in knowing whether visitors come to Duke Digital Collections for academic research or for some other reason. Of the 125 total responses to question 2, personal research and casual browsing outweighed academic research (see the bar graph below). Respondents were able to check multiple categories. There were 8 instances where the same respondent selected casual browsing and personal research, 4 instances where casual browsing was paired with “followed a link,” 3 where academic research was tied to casual browsing, and 3 where academic research was tied to other. Several users selected more than 2 categories, but by and large respondents selected 1 category only. To me, this suggests that our users are very clear about why they come to Duke Digital Collections.

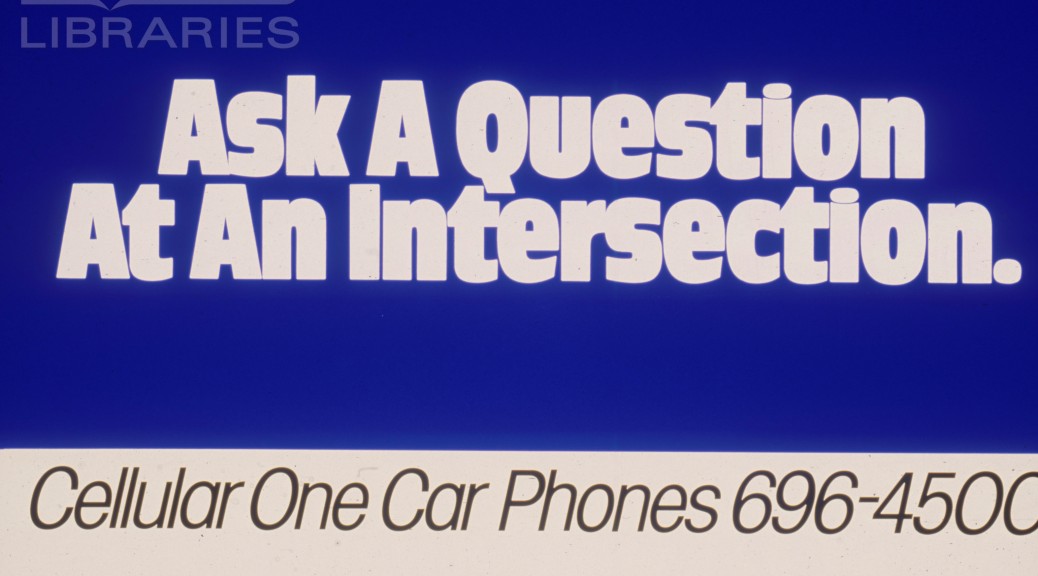

Respondents were prompted to enter their research topic/purpose, whether it be academic, personal or other. Every respondent who identified with other filled in a topic, 73% of personal researchers identified their topic, and 63% of academic researchers shared their topics. Many of the topics/purposes were unique, but research around music came up across all 3 categories, as did topics related to the history of a local region (all different regions). Advertising-related topics also came up under academic and personal research. Several of the respondents who chose other entered a topic that suggested they were in the early phases of a book project or looking for materials to use in classes. To me these seemed like more academically associated activities, and I was surprised they turned up under “other”. If I were able to ask follow-up questions of these respondents, I would prompt for more information about their topic and why they defined it as academic or personal. Similarly, if we were designing this survey again, I think we would want to include a category for academic-related uses apart from official research.

The results for question 2 also remained mostly consistent with our first view of the results last summer. Academic research and casual browsing were tied at a 28% response rate each initially, and finished tied at a 30% response rate. The “followed a link” response rate went down from 17% to an overall 11%, personal research also went down from 44% to 36% overall, and other climbed slightly from 11% to 15% overall.

Question 3: What will you do with the images and/or resources you find on this site?

The third survey question attempts to get at the “now what” part of resource discovery. Following trends with the first two questions, it is not surprising that a majority of the 121 responses are oriented towards “personal” use (see bar graph below). As with question 2, respondents were able to select multiple choices; however, they tended to choose only one response.

Everyone who selected “other” did enter a statement, and of these a handful seemed like they could have fit under one of the defined categories. Several of the write-ins mentioned wanting to share items they found with family and friends, presumably using methods other than social media. Five “others” responded with potentially academically related pursuits such as “an article”, “a book”, “update a book”, and 2 class-related projects. I re-ran some numbers and combined these 5 responses with the academic publication, teaching tool, and homework respondents for a total of 55 possibly academically related answers, or 45% of the total responses to this question. The new 45% “academicish” grouping, as I like to think of it, is a more substantial total than each academic topic on its own. I propose this as an interesting way to slice and dice the data, and I’m sure there are others.
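The regrouping above comes down to a little arithmetic, sketched here in Python using only the totals quoted in this post. The variable names are illustrative, and the per-category counts for academic publication, teaching tool, and homework are not given, so only their combined count (55 minus the 5 write-ins) is derived.

```python
# Totals quoted in the post; variable names are illustrative assumptions.
total_responses = 121     # responses to question 3
academicish_total = 55    # combined "academicish" answers
write_ins = 5             # "other" write-ins with academic-sounding purposes

# Combined count for the three predefined academic categories
# (academic publication, teaching tool, homework); the individual
# counts are not stated in the post.
predefined_academic = academicish_total - write_ins

# Share of all question 3 responses that fall in the "academicish" grouping.
academicish_share = academicish_total / total_responses

print(f"Predefined academic answers: {predefined_academic}")
print(f"Academicish share: {academicish_share:.0%}")
```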

Observations

My colleagues and I have been very pleased with the results of this survey. First, we couldn’t be more thrilled that we were successfully able to collect necessary data (any data!). At the beginning of this assessment project, we were looking for evidence of research, scholarly and instructional use of Duke Digital Collections. We did find some, but this survey along with other data shows that the majority of our users come to Duke Digital Collections with a more personal agenda. We welcome the opportunity to make this kind of individual impact, and it is powerful. If the respondents of this survey are a representative sample of our user base, then our patrons are actively performing our collections (we have a lot of music), sharing items with family, friends, and community, as well as using the collections to pursue a wide variety of interests.

While this survey data assures us that we are making individual impacts, it also reveals that there is more we can do to cultivate our scholarly and researcher audience. This will be a long-term process, but we have made some short-term progress. As a result of our work in 2015, my colleagues and I put together a “teaching with digital collections” webpage to collect examples of instructional use and encourage more. In the course of developing a new platform for digital collections, we are also exploring new tools that could serve scholarly researchers more effectively. With a look towards the longer term, all of Duke University Libraries has been engaged in strategic planning for the past year, and Digital Collections is no exception. As we develop our goals around scholarly use, survey data like this is an important asset.

I’m curious to hear from others: what has your experience been with surveys? What have you learned, and how have you put that knowledge to use? Feel free to comment or contact me directly! (molly.bragg at duke.edu)

This sentence was very interesting to me: “We did find some, but this survey along with other data shows that the majority of our users come to Duke Digital Collections with a more personal agenda.”

Also, I work at the library of an Iranian university, and we always see this behavior.

Thanks for this very nice post.